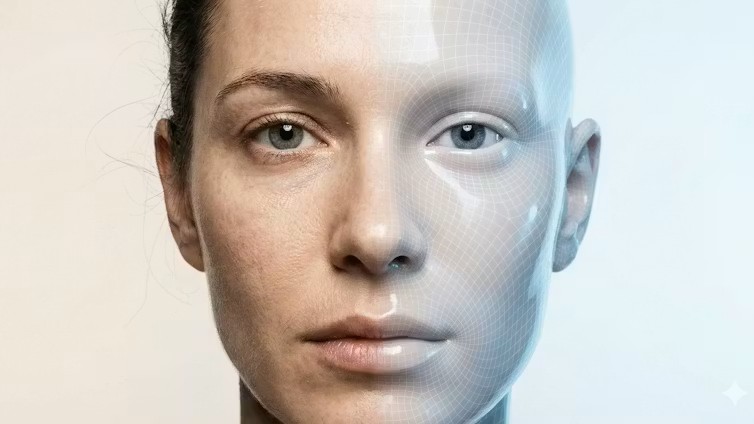

Deepfakes are flooding the internet at an unprecedented rate. Over the course of 2025, AI-generated faces, voices, and full-body performances that mimic real people became dramatically more convincing, and they are increasingly used to deceive: in social media posts, in video calls, and in outright cybercrime.

As deepfake technology has evolved, its realism has reached a point where even experts struggle to tell synthetic media from genuine recordings. Cybersecurity firm DeepStrike estimates that the number of deepfakes online rose from roughly 500,000 in 2023 to about 8 million in 2025, with annual growth the firm puts at nearly 900%. This rapid increase in both the quantity and the quality of deepfakes poses serious challenges in the fight against digital deception.
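As a back-of-the-envelope check on scale, the two endpoint estimates can be converted into an implied compound annual growth rate (CAGR). This sketch uses only the 2023 and 2025 figures quoted above; the function name is illustrative, and DeepStrike's own ~900% figure may rest on a different method or data.

```python
def implied_annual_growth(start: float, end: float, years: float) -> float:
    """Compound annual growth rate implied by two endpoint counts."""
    # CAGR = (end / start) ** (1 / years) - 1
    return (end / start) ** (1.0 / years) - 1.0

# Endpoint estimates quoted in the text (DeepStrike): ~500,000 in 2023, ~8 million in 2025.
start_2023 = 500_000
end_2025 = 8_000_000

total_multiple = end_2025 / start_2023
cagr = implied_annual_growth(start_2023, end_2025, years=2)

print(f"Overall multiple: {total_multiple:.0f}x")        # Overall multiple: 16x
print(f"Implied compound annual growth: {cagr:.0%}")     # Implied compound annual growth: 300%
```

Taken at face value, a 16-fold rise over two years corresponds to the count roughly quadrupling each year (about 300% compound annual growth), whereas sustained 900% annual growth would mean a tenfold increase every year.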

The Shift to Realistic, Accessible Deepfakes

Several technical developments drove this escalation. Video realism improved sharply with generation models designed to maintain temporal consistency: they produce coherent motion, stable identities, and smooth frame-to-frame transitions. Gone are the days when flickering, warping, or distortion around the eyes and jawline could reliably expose a deepfake; today's synthetic faces can look strikingly real.
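To make the lost "flicker" cue concrete, here is a toy sketch of the kind of frame-to-frame consistency check that older, temporally unstable fakes could trip. It is an illustration only: it assumes grayscale frames stored as nested lists of intensities in [0, 1], and the helper names and fixed threshold are hypothetical. Real detectors are far more sophisticated, and temporally consistent generators no longer produce such obvious spikes.

```python
from typing import List

Frame = List[List[float]]  # grayscale frame: rows of pixel intensities in [0, 1]

def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Mean absolute per-pixel difference between two same-sized frames."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count

def flicker_scores(frames: List[Frame]) -> List[float]:
    """Frame-to-frame change for each consecutive pair of frames."""
    return [mean_abs_diff(frames[i], frames[i + 1]) for i in range(len(frames) - 1)]

def flag_flicker(frames: List[Frame], threshold: float) -> List[int]:
    """Indices i where the jump from frame i to frame i+1 exceeds the (hypothetical) threshold."""
    return [i for i, s in enumerate(flicker_scores(frames)) if s > threshold]

# Toy clip: a 4x4 image that brightens smoothly, with one glitched frame at index 3.
clip = [[[0.1 * t] * 4 for _ in range(4)] for t in range(6)]
clip[3] = [[0.9] * 4 for _ in range(4)]

print(flag_flicker(clip, threshold=0.2))  # [2, 3]
```

A single glitched frame shows up as two consecutive spikes, one jumping into the bad frame and one jumping out of it, which is the kind of temporal inconsistency this check looks for.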

In parallel, voice cloning has crossed the “indistinguishable threshold.” A few seconds of audio are now enough to generate a convincing clone of someone’s voice, complete with natural rhythm, pauses, emotion, and emphasis. The technology is fueling large-scale fraud, with major retailers reporting thousands of AI-generated scam calls daily. The audible artifacts that once gave synthetic voices away have largely disappeared, further complicating detection.

Anyone Can Create Deepfakes Now

One of the most alarming developments is how accessible deepfake creation has become. Tools such as OpenAI’s Sora 2 and Google’s Veo 3, along with offerings from a wave of startups, have lowered the technical barrier to producing deepfakes. Anyone with an idea can now draft a script with a large language model chatbot such as ChatGPT or Google’s Gemini, then generate polished, AI-driven media in minutes. The entire process, from scripting to production, can be automated, making it easier than ever to create convincing and harmful content at scale.

This democratization of deepfake creation means that the volume of synthetic media is likely to grow even faster. The surge in deepfakes is a significant concern, especially in a media landscape where content moves rapidly, often before it can be properly verified. From misinformation to scams, the damage is already being done.

The Future: Real-Time Deepfakes

Looking ahead, the trajectory is clear: deepfakes are moving toward real-time generation. In 2026, we are likely to see synthetic media that not only looks like real people but also behaves like them, live. Models are expected to move from generating pre-rendered clips to producing interactive content in real time, so that a participant on a video call, for instance, could be entirely synthetic. Such AI-driven actors could adapt their faces, voices, and mannerisms to any prompt on the fly, making them harder than ever to distinguish from real people.

The implications are vast. Scammers could deploy responsive avatars that react to their targets as a conversation unfolds, and digital actors could carry on live interactions that mimic human behavior with alarming accuracy. As these capabilities mature, traditional detection methods such as pixel-level analysis will struggle to keep up.

The Need for Stronger Detection Methods

With deepfakes evolving this quickly, human judgment alone will no longer be enough to identify them. Countering the threat will require a shift toward infrastructure-level protections. These could include secure media provenance, in which content is cryptographically signed so that its origin and integrity can be verified, and AI-powered tools such as the Deepfake-o-Meter, which analyze multimodal content for signs of manipulation.
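The core idea behind signed provenance is that any change to the signed bytes breaks verification. Production provenance systems typically rely on public-key signatures, so anyone can check content without holding the signer's secret. The minimal sketch below swaps in a simpler technique, a shared-key HMAC from Python's standard library, purely to stay self-contained; the function names and the key are hypothetical.

```python
import hashlib
import hmac

# Simplified stand-in: an HMAC with a shared secret, NOT a public-key signature.

def sign_media(content: bytes, key: bytes) -> str:
    """Return a hex tag binding the key holder to this exact content."""
    digest = hashlib.sha256(content).digest()  # fingerprint of the media bytes
    return hmac.new(key, digest, hashlib.sha256).hexdigest()

def verify_media(content: bytes, tag: str, key: bytes) -> bool:
    """Check that the content still matches the tag issued at signing time."""
    expected = sign_media(content, key)
    # compare_digest avoids leaking information through timing differences
    return hmac.compare_digest(expected, tag)

key = b"hypothetical-publisher-key"
original = b"example media bytes"
tag = sign_media(original, key)

print(verify_media(original, tag, key))         # True
print(verify_media(original + b"!", tag, key))  # False: the content was altered
```

Hashing the content first keeps the authenticated message small regardless of file size; a real deployment would also bind metadata such as the creator and capture time into what gets signed.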

As deepfake technology continues to advance, countering it will require more sophisticated detection methods, along with greater awareness and vigilance from users. Only by combining cutting-edge technology with robust security frameworks will society be able to tackle the rise of synthetic media and protect individuals from its potential harms.