New York lawmakers have passed the RAISE Act, a groundbreaking bill aimed at preventing AI-driven disasters and holding major AI labs accountable. The bill targets frontier AI models, such as those from OpenAI, Google, and Anthropic, that could cause catastrophic harm, including mass casualties or economic losses exceeding $1 billion.

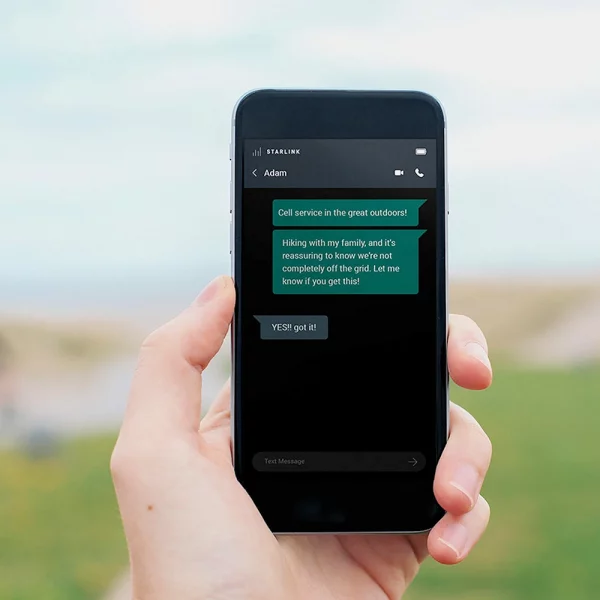

The RAISE Act, which now awaits Governor Kathy Hochul's signature, requires large AI labs to publish transparency reports on their frontier models and to disclose safety incidents. Reportable incidents include AI misuse, erratic model behavior, and cybersecurity breaches. Non-compliance could lead to civil penalties of up to $30 million.

Unlike California's vetoed SB 1047, New York's bill was tailored to avoid stifling innovation, particularly among startups and academic researchers. Senator Andrew Gounardes, a co-sponsor of the bill, emphasized that it applies only to AI models trained with more than $100 million in computing resources and deployed in New York.

Backed by AI pioneers such as Geoffrey Hinton and Yoshua Bengio, the bill represents a win for AI safety advocates. However, it has drawn criticism from Silicon Valley investors and tech firms, who warn of regulatory overreach and the possibility that companies could withdraw their most advanced AI models from the New York market.

Assemblymember Alex Bores dismissed those fears, citing New York's massive GDP as a strong incentive for companies to comply rather than leave. The bill has no "kill switch" requirement and exempts post-training developers from liability, two key differences from other proposals.

The RAISE Act could become the first state-level AI law in the U.S. to demand enforceable safety standards from frontier model developers.